Ortelius Blog

Topics include Supply Chain Security, Vulnerability Management, Neat Tricks, and Contributor insights.

Why Post-Deployment Endpoint Detection Is Now Critical

Most security teams still treat vulnerability management as a build-time activity. But attackers don’t. Modern supply-chain attacks don’t target source code repositories or CI pipelines alone, they mainly target running systems. Production is now the real attack surface, and organizations that stop security at deployment leave their most critical infrastructure exposed.

It’s time to rethink how vulnerability detection works.

The Production Security Gap

Today’s security tooling is heavily weighted toward pre-deployment scanning:

- SAST

- SCA

- Container image scanners

- SBOM generators

- Policy enforcement tools

These tools are essential, but do not address CVEs reported after the software was deployed.

Consider this imbalance:

- 80% of open-source vulnerabilities are discovered before deployment

- 100% of successful supply-chain attacks occur after deployment

Security teams are protecting what they build, but not what they run. That gap is where attackers operate.

Attackers Move Faster Than Detection Pipelines

The velocity mismatch between defenders and attackers is growing rapidly. Open-source vulnerabilities have surged over the past three years, with dozens of new critical threats appearing daily, while the average remediation time still exceeds 100 days. At the same time, attackers can often weaponize newly disclosed vulnerabilities in less than 10 days, creating a dangerous response gap that traditional rescanning strategies and manual monitoring workflows simply cannot close. As AI continues to accelerate attacker capabilities, defensive detection strategies must evolve just as quickly. In fact recent reports show that an attacker can exploit a vulnerability in less than 1 day using AI.

Why Post-Deployment Security Has Lagged Behind

Pre-deployment security tools are easy to adopt, with value recognized in minutes. The steps are often, sign up → scan → see results. Post-deployment solutions are not. Traditional runtime vulnerability detection often requires:

| Phase | Activities | Timeline |

|---|---|---|

| Planning | Tool selection, architecture decisions | 2–4 weeks |

| Asset inventory | Discovery and CMDB alignment | 4–12 weeks |

| Deployment | Agent rollout and integration | 4–8 weeks |

| Credential configuration | Authenticated scanning setup | 2–6 weeks |

| Tuning | Policy adjustments and noise reduction | 4–8 weeks |

| Operationalization | Dashboards and workflows | 4–12 weeks |

This complexity limits adoption to large enterprises, and leaves smaller teams exposed.

Agents and Agentless Models Both Fall Short

Traditional endpoint detection architectures rely on two approaches:

Agent-Based Detection

Challenges include:

- Operational overhead

- Installation complexity

- Inventory tracking burden

- Limited suitability for edge, air-gapped, HPC, or on-orbit systems

Agentless Detection

Challenges include:

- Reduced runtime visibility

- Weak container awareness

- Dependence on network scans and control-plane APIs

- Difficulty tracking ephemeral infrastructure

Neither model fully solves runtime supply-chain exposure.

A New Approach: The Deployment Digital Twin

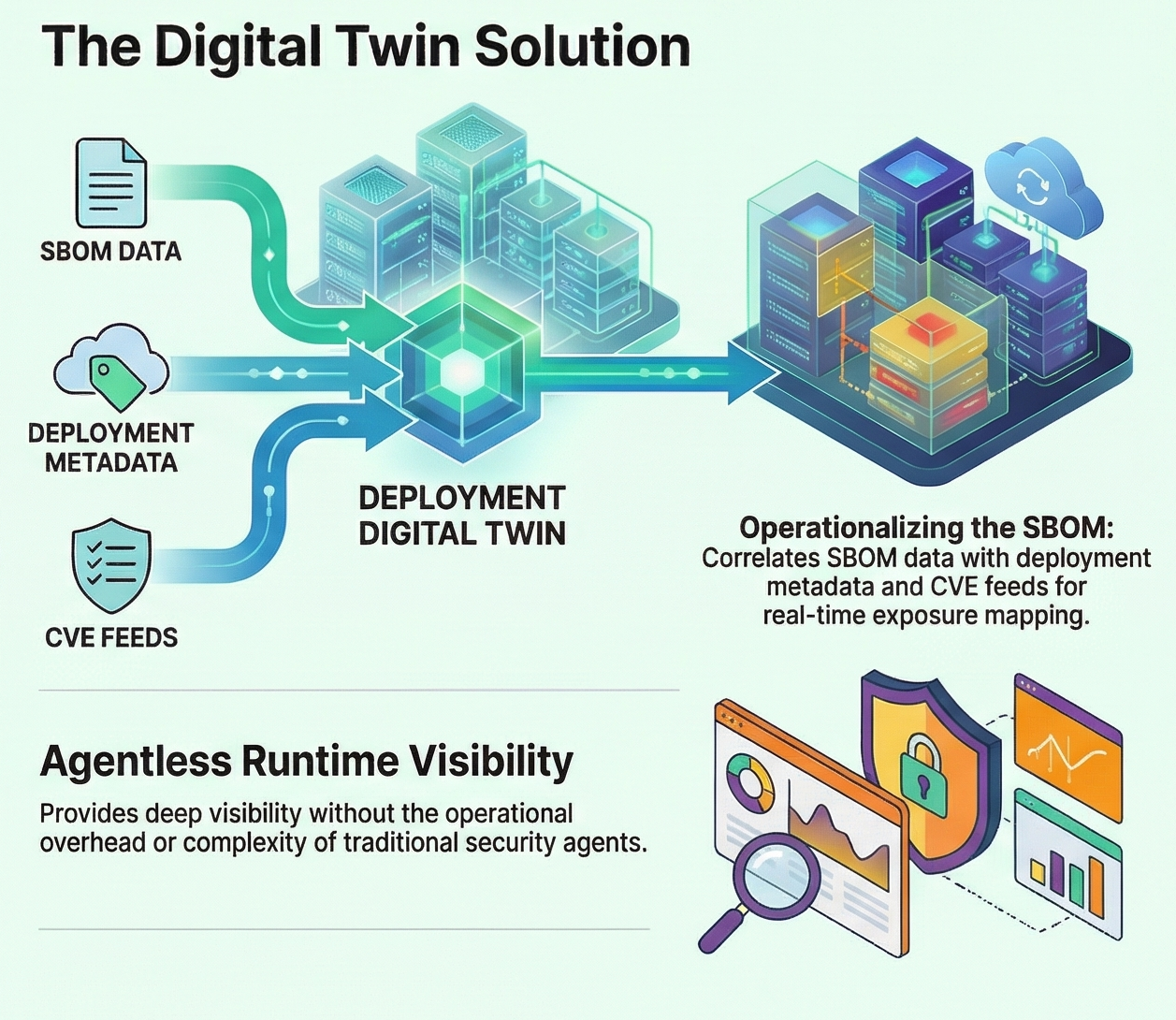

A better model is emerging for post-deployment vulnerability detection: the deployment digital twin.

Instead of repeatedly scanning endpoints, the digital twin treats SBOMs as operational telemetry and correlates them with deployment metadata for every release. This allows organizations to map software components directly to live endpoints and continuously synchronize exposure against vulnerability intelligence feeds. As new CVEs are disclosed, affected runtime systems can be identified immediately. The result is a shift from periodic scanning cycles to continuous vulnerability correlation aligned with what is actually running in production.

Making SBOMs Operational

SBOMs are often generated to satisfy compliance requirements and then archived as documentation artifacts. In a digital twin architecture, they become something far more valuable: live inputs to runtime security intelligence.

When combined with deployment metadata, SBOMs create a continuous feedback loop:

SBOM → Deployment Metadata → Digital Twin → CVE Sync → Response

This architecture makes it possible to determine exactly where vulnerable packages are running, prioritize only the high-risk vulnerabilities that affect deployed systems, and dramatically reduce alert fatigue. Instead of rescanning environments repeatedly, organizations gain continuous endpoint-aware detection and the ability to automate remediation workflows directly through their software delivery pipelines.

How the Digital Twin Model Works in Practice

A deployment digital twin continuously correlates SBOM data from builds with deployment metadata from sources such as Kubernetes audit logs, Helm releases, and pipeline execution outputs. It synchronizes this evidence with vulnerability intelligence feeds like OSV.dev and immediately identifies newly disclosed vulnerabilities impacting live systems.

Because exposure is mapped directly to deployed components, remediation can be automated. Pull requests can be generated to update dependency files, while issues can be opened when upgrades introduce breaking changes. This creates a closed feedback loop between runtime exposure and developer action, allowing teams to respond faster and with greater precision.

Faster Remediation With Less Noise

Traditional scanners frequently generate hundreds—or even thousands—of vulnerability alerts, many of which are not actionable because they do not affect running systems. A digital twin approach changes this by focusing only on deployed components, reachable attack surface, and high-risk vulnerabilities that matter operationally.

The result is reduced alert fatigue, faster prioritization, and remediation timelines that shrink from months to days. Most importantly, the Ortelius team is currently working on the implementation of AI to auto-remediate dependency file fixes that can be pushed quickly back through the software factory, ensuring vulnerability response becomes part of normal delivery workflows rather than a separate security burden.

Designed for Modern and Mission-Critical Environments

Digital twin architectures are particularly effective in environments where traditional agents are difficult or impossible to deploy, including cloud platforms, edge infrastructure, HPC clusters, air-gapped systems, and satellite or on-orbit deployments. They also align naturally with emerging regulatory expectations and federal cybersecurity guidance, including EO 14028, NIST SSDF (800-218), NIST container security guidance (800-190), and Continuous Authority to Operate (cATO) initiatives. For government and regulated sectors, runtime vulnerability awareness is quickly becoming a requirement rather than a best practice.

A Simpler Path to Runtime Security

Historically, post-deployment detection required complex enterprise rollouts and extensive operational coordination. The digital twin model changes that by leveraging existing SBOM pipelines and deployment metadata already produced by modern software factories.

Modern implementations install quickly, require no endpoint agents, and deliver meaningful runtime exposure insights in minutes. Security teams gain visibility into deployed risk without disrupting developer workflows or introducing additional infrastructure overhead.

Production Is the New Attack Surface

Security does not end at deployment. It begins there. Organizations that want to reduce exposure to software supply-chain threats must continuously monitor deployed components, operationalize SBOM intelligence, correlate vulnerabilities against live endpoints, prioritize runtime attack surface risk, and automate remediation wherever possible. Deployment digital twins make this approach achievable at scale.

The future of supply-chain security isn’t just scanning what you build. It’s defending what you run.

Get Involved

If you’re interested in advancing post-deployment vulnerability detection, consider participating in the Ortelius open-source efforts driving this architecture forward. We welcome your insights and participation.